Amazon typically asks interviewees to code in an online document. But this can vary; it could be on a physical whiteboard or a virtual one. Ask your recruiter which it will be and practice in that format a lot. Now that you know what questions to expect, let's focus on how to prepare.

Below is our four-step prep plan for Amazon data scientist candidates. If you're preparing for more companies than just Amazon, then check our general data science interview preparation guide. Before spending tens of hours preparing for an interview at Amazon, you should take some time to make sure it's actually the right company for you. Most candidates fail to do this.

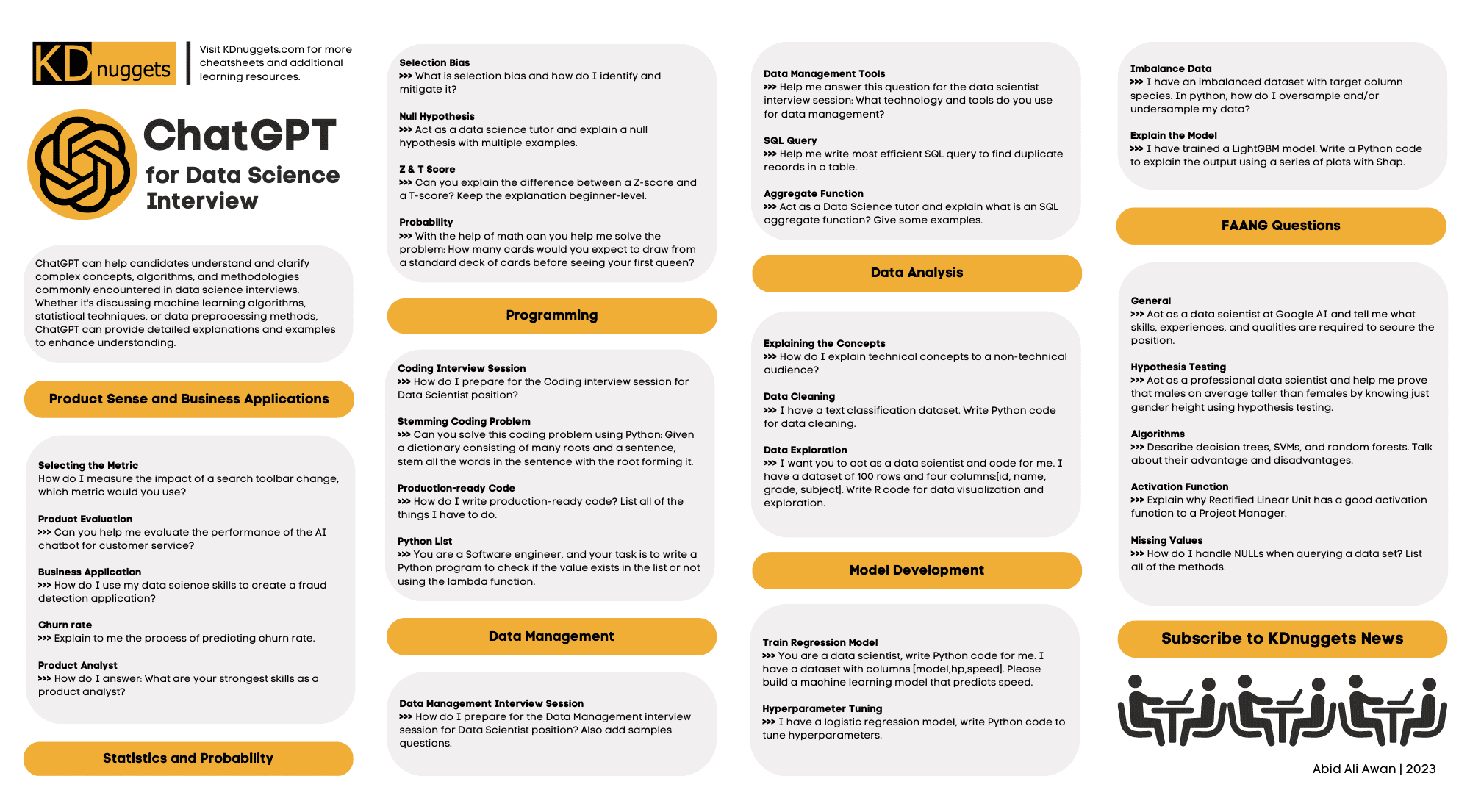

Practice the method using example questions such as those in section 2.1, or those for coding-heavy Amazon positions (e.g. the Amazon software development engineer interview guide). Also, practice SQL and programming questions with medium and hard level examples on LeetCode, HackerRank, or StrataScratch. Take a look at Amazon's technical topics page, which, although it's designed around software development, should give you an idea of what they're looking out for.

Note that in the onsite rounds you'll likely have to code on a whiteboard without being able to execute it, so practice working through problems on paper. For machine learning and statistics questions, there are online courses built around statistical probability and other useful topics, some of which are free. Kaggle also offers free courses on introductory and intermediate machine learning, as well as data cleaning, data visualization, SQL, and others.

Common Data Science Challenges In Interviews

Make sure you have at least one story or example for each of the principles, drawn from a wide range of positions and projects. Finally, a great way to practice all of these different types of questions is to interview yourself out loud. This may sound strange, but it will significantly improve the way you communicate your answers during an interview.

One of the main challenges of data scientist interviews at Amazon is communicating your different answers in a way that's easy to understand. As a result, we strongly recommend practicing with a peer interviewing you.

Be warned, as you may come up against the following problems: it's hard to know if the feedback you get is accurate; peers are unlikely to have insider knowledge of interviews at your target company; and on peer platforms, people often waste your time by not showing up. For these reasons, many candidates skip peer mock interviews and go straight to mock interviews with an expert.

How To Approach Statistical Problems In Interviews

That's an ROI of 100x!

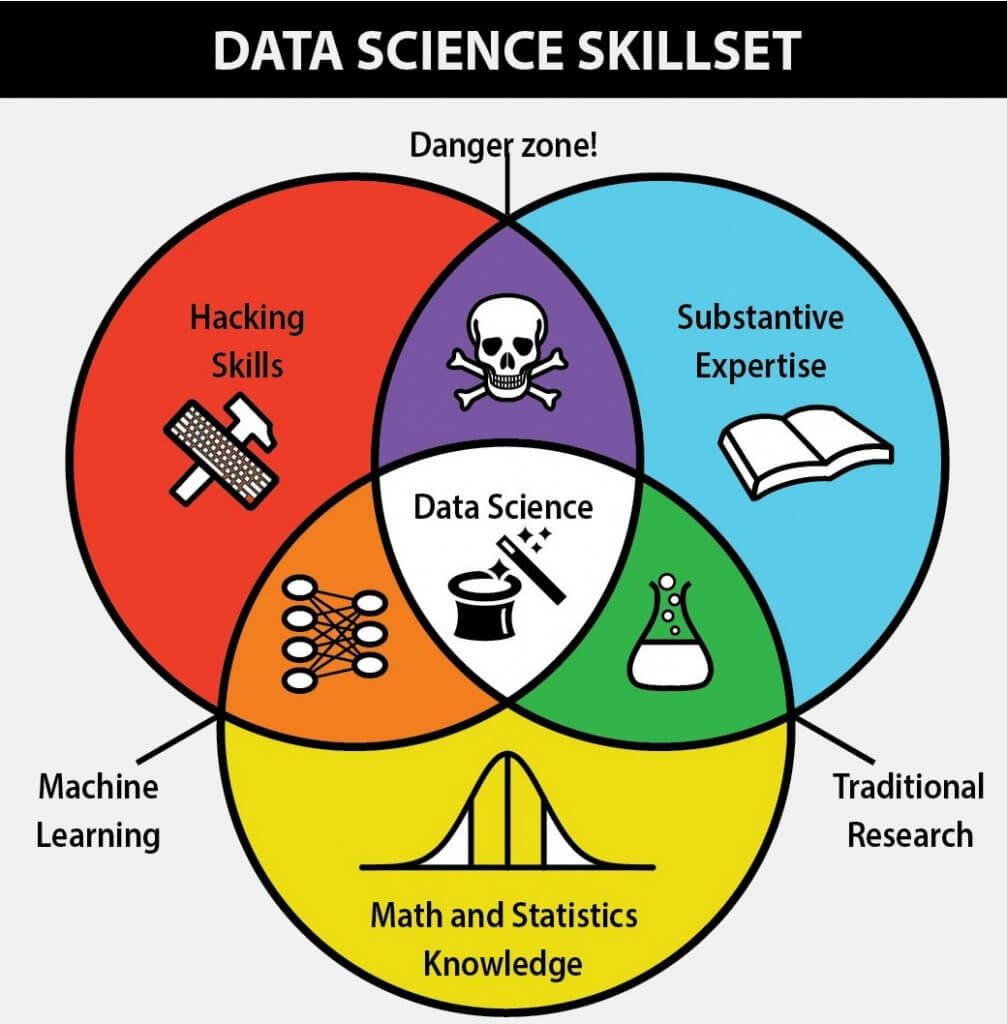

Traditionally, data science focuses on mathematics, computer science and domain knowledge. While I will briefly cover some computer science fundamentals, the bulk of this blog will mostly cover the mathematical basics one might need to brush up on (or even take a whole course on).

While I understand most of you reading this are more math heavy by nature, realize that the bulk of data science (dare I say 80%+) is collecting, cleaning and processing data into a useful form. Python and R are the most popular languages in the data science space. However, I have also come across C/C++, Java and Scala.

Key Data Science Interview Questions For Faang

It is common to see the majority of data scientists fall into one of two camps: Mathematicians and Database Architects. If you are in the second camp, this blog won't help you much (YOU ARE ALREADY AWESOME!).

Data collection might mean gathering sensor data, parsing websites or carrying out surveys. After collecting the data, it needs to be transformed into a usable form (e.g. a key-value store in JSON Lines files). Once the data is collected and put in a usable format, it is essential to perform some data quality checks.
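As a rough illustration of the step above, here is a minimal sketch that parses JSON Lines text and runs two simple quality checks (missing values and exact duplicates). The sensor records, field names and the helper names `load_jsonl` and `quality_report` are made up for this example; real checks would depend on your data.

```python
import json

# Hypothetical sensor readings in JSON Lines form: one JSON object per line.
raw_jsonl = """\
{"sensor_id": "a1", "temperature": 21.5}
{"sensor_id": "a2", "temperature": null}
{"sensor_id": "a1", "temperature": 21.5}
{"sensor_id": "a3", "temperature": 19.0}
"""


def load_jsonl(text):
    """Parse JSON Lines text into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def quality_report(records, required_keys):
    """Count records with a missing required value and exact duplicates."""
    missing = sum(
        1 for record in records
        if any(record.get(key) is None for key in required_keys)
    )
    seen = set()
    duplicates = 0
    for record in records:
        # Serialize with sorted keys so identical records compare equal.
        fingerprint = json.dumps(record, sort_keys=True)
        if fingerprint in seen:
            duplicates += 1
        seen.add(fingerprint)
    return {"rows": len(records), "missing": missing, "duplicates": duplicates}


records = load_jsonl(raw_jsonl)
report = quality_report(records, ["sensor_id", "temperature"])
print(report)  # {'rows': 4, 'missing': 1, 'duplicates': 1}
```

In practice you would probably add range checks and type checks as well; the point is only that these checks are cheap to run before any modelling.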

Comprehensive Guide To Data Science Interview Success

However, in cases of fraud, it is very common to have heavy class imbalance (e.g. only 2% of the dataset is actual fraud). Such information is important for deciding on the right choices for feature engineering, modelling and model evaluation. For more information, check my blog on Fraud Detection Under Extreme Class Imbalance.
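To make the 2% example concrete, the sketch below computes class shares for a made-up label list and shows why plain accuracy is misleading under imbalance: a model that always predicts the majority class already scores 98%. The data and the helper name `class_shares` are assumptions for illustration.

```python
from collections import Counter


def class_shares(labels):
    """Return the fraction of the dataset that belongs to each class."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


# Hypothetical labels: 1 = fraud, 0 = legitimate (2 fraud cases out of 100).
labels = [1] * 2 + [0] * 98
shares = class_shares(labels)

# Accuracy of a trivial model that always predicts the majority class.
majority_baseline_accuracy = max(shares.values())

print(shares[1])                   # 0.02
print(majority_baseline_accuracy)  # 0.98
```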

In bivariate analysis, each feature is compared to the other features in the dataset. Scatter matrices allow us to find hidden patterns such as features that should be engineered together and features that may need to be removed to avoid multicollinearity. Multicollinearity is a real issue for several models, such as linear regression, and hence needs to be taken care of accordingly.
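A scatter matrix is a visual check; a numeric counterpart is to compute the pairwise Pearson correlation and flag pairs above a threshold. The sketch below does this in plain Python. The feature values, the 0.9 threshold and the function names are assumptions chosen for illustration, not a rule from the text.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def highly_correlated_pairs(features, threshold=0.9):
    """Return (name_a, name_b, r) for feature pairs with |r| >= threshold."""
    flagged = []
    for name_a, name_b in combinations(features, 2):
        r = pearson(features[name_a], features[name_b])
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, r))
    return flagged


# Hypothetical dataset: height recorded twice in different units, plus age.
features = {
    "height_cm": [150.0, 160.0, 170.0, 180.0, 190.0],
    "height_in": [59.1, 63.0, 66.9, 70.9, 74.8],
    "age": [30.0, 22.0, 41.0, 25.0, 35.0],
}
flagged = highly_correlated_pairs(features)
for name_a, name_b, r in flagged:
    print(name_a, name_b, round(r, 3))
```

Here only the two height columns are flagged, which suggests dropping one of them before fitting a linear model.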

In this section, we will explore some common feature engineering tactics. At times, a feature by itself may not provide useful information. Imagine using internet usage data. You will have YouTube users going as high as gigabytes while Facebook Messenger users use a couple of megabytes.
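The text above does not name a remedy for this kind of scale gap. One common option, used here purely as an assumption, is a log transform, which compresses values that span several orders of magnitude into a comparable range. The usage figures are invented.

```python
from math import log10

# Hypothetical monthly data usage in bytes for two kinds of users.
usage_bytes = {
    "youtube_user": 5_000_000_000,  # about 5 gigabytes
    "messenger_user": 2_000_000,    # about 2 megabytes
}

# Log transform (an assumed remedy, not one named in the text above).
log_usage = {user: log10(value) for user, value in usage_bytes.items()}

raw_ratio = usage_bytes["youtube_user"] / usage_bytes["messenger_user"]
print(raw_ratio)  # 2500.0
print({user: round(value, 2) for user, value in log_usage.items()})
# {'youtube_user': 9.7, 'messenger_user': 6.3}
```

On the raw scale the two users differ by a factor of 2500; on the log scale they differ by roughly 3.4 units.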

Another issue is the use of categorical values. While categorical values are common in the data science world, realize that computers can only understand numbers. For categorical values to make mathematical sense, they need to be transformed into something numeric. Typically, for categorical values, it is common to perform a One Hot Encoding.
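A minimal sketch of one hot encoding in plain Python follows: each category gets its own column, and a row has a 1 only in the column for its category. The colour values and the function name `one_hot_encode` are illustrative assumptions; libraries such as pandas and scikit-learn provide their own encoders.

```python
def one_hot_encode(values):
    """Return (sorted categories, rows) with one 0/1 column per category."""
    categories = sorted(set(values))
    column_of = {category: i for i, category in enumerate(categories)}
    encoded = []
    for value in values:
        row = [0] * len(categories)
        row[column_of[value]] = 1
        encoded.append(row)
    return categories, encoded


categories, encoded = one_hot_encode(["red", "green", "blue", "green"])
print(categories)  # ['blue', 'green', 'red']
print(encoded)     # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]
```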

Pramp Interview

Sometimes, having too many sparse dimensions will hamper the performance of the model. For such situations (as commonly done in image recognition), dimensionality reduction algorithms are used. An algorithm commonly used for dimensionality reduction is Principal Component Analysis or PCA. Learn the mechanics of PCA, as it is also one of those topics that comes up in interviews! For more information, check out Michael Galarnyk's blog on PCA using Python.
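To show the mechanics at a small scale, the sketch below centers a toy 2-D dataset, builds its covariance matrix, and finds only the first principal component using power iteration. This is a simplified stand-in, assumed for illustration; a full PCA would use an eigendecomposition or SVD from a numerical library. The data and function names are made up.

```python
from math import sqrt


def center(rows):
    """Subtract the column mean from every value."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in rows]


def covariance_matrix(centered):
    """Sample covariance matrix of already-centered rows."""
    n, d = len(centered), len(centered[0])
    return [
        [sum(row[i] * row[j] for row in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]


def first_principal_component(cov, iterations=200):
    """Approximate the top eigenvector of cov by power iteration."""
    d = len(cov)
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


# Hypothetical data lying close to the line y = 2x.
rows = [[1.0, 2.0], [2.0, 4.1], [3.0, 5.9], [4.0, 8.0], [5.0, 10.1]]
centered = center(rows)
component = first_principal_component(covariance_matrix(centered))

# Project each centered point onto the component: 2 dimensions become 1.
projected = [sum(row[j] * component[j] for j in range(2)) for row in centered]
print([round(x, 3) for x in component])
```

The component points roughly along the direction (1, 2), normalized to unit length, which is the direction of greatest variance in this toy data.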

The common categories of feature selection methods and their subcategories are explained in this section. Filter methods are generally used as a preprocessing step. The selection of features is independent of any machine learning algorithm. Instead, features are selected on the basis of their scores in various statistical tests for their correlation with the outcome variable.

Common methods under this category are Pearson's Correlation, Linear Discriminant Analysis, ANOVA and Chi-Square. In wrapper methods, we try to use a subset of features and train a model using them. Based on the inferences that we draw from the previous model, we decide to add or remove features from the subset.
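As an illustration of the filter approach described above, the sketch below scores each feature by the absolute value of its Pearson correlation with the outcome and ranks the features, without training any model. The dataset and the function name `rank_features` are assumptions for this example.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def rank_features(features, target):
    """Rank feature names by |correlation with target|, strongest first."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical data: exam score versus two candidate features.
features = {
    "shoe_size": [42.0, 38.0, 44.0, 39.0, 41.0],
    "hours_studied": [1.0, 2.0, 3.0, 4.0, 5.0],
}
target = [52.0, 58.0, 65.0, 71.0, 78.0]

ranking = rank_features(features, target)
print(ranking)  # ['hours_studied', 'shoe_size']
```

A wrapper method would instead retrain a model for each candidate subset, which is more expensive but accounts for interactions between features.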

Using Pramp For Advanced Data Science Practice

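Common methods under this category are Forward Selection, Backward Elimination and Recursive Feature Elimination. LASSO and RIDGE are common regularization methods. The regularizations are given below for reference, in their standard form with coefficients $\beta_j$ and penalty strength $\lambda$:

Lasso: $$\min_{\beta} \sum_{i=1}^{n} \Big(y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij}\Big)^2 + \lambda \sum_{j=1}^{p} |\beta_j|$$

Ridge: $$\min_{\beta} \sum_{i=1}^{n} \Big(y_i - \beta_0 - \sum_{j=1}^{p} \beta_j x_{ij}\Big)^2 + \lambda \sum_{j=1}^{p} \beta_j^2$$

That being said, it is worth understanding the mechanics behind LASSO and RIDGE for interviews.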

Unsupervised learning is when the labels are unavailable. That being said, do not confuse it with supervised learning, where the labels are available! This mistake is enough for the interviewer to cancel the interview. Another beginner mistake people make is not normalizing the features before running the model.
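Normalization can take several forms; the sketch below uses z-score standardization (subtract the mean, divide by the standard deviation), which is one common choice and an assumption here, since the text does not say which method it means. The income values are invented.

```python
from statistics import mean, pstdev


def standardize(column):
    """Rescale a column to zero mean and unit (population) standard deviation.

    Assumes the column is not constant, otherwise the deviation is zero.
    """
    mu = mean(column)
    sigma = pstdev(column)
    return [(x - mu) / sigma for x in column]


# Hypothetical feature on a large scale.
income = [30_000.0, 50_000.0, 70_000.0]
scaled = standardize(income)
print([round(x, 3) for x in scaled])  # [-1.225, 0.0, 1.225]
```

When you have a train and test split, compute the mean and standard deviation on the training data only and reuse them for the test data.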

Rule of thumb: Linear and Logistic Regression are the most basic and commonly used machine learning algorithms out there. Before doing any analysis with a fancier model, run one of them first as a benchmark. One common interview slip people make is starting their analysis with a more complex model like a neural network. No doubt, a neural network can be highly accurate. However, benchmarks are important.
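To show how cheap such a benchmark can be, here is a minimal sketch of a one-feature linear regression fitted with the closed-form least squares solution, plus its mean squared error. The data and function names are assumptions; any more complex model would then need to beat `baseline_mse` on held-out data to justify its complexity.

```python
def fit_simple_linear_regression(xs, ys):
    """Least squares fit of y = slope * x + intercept for one feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mean_squared_error(ys, predictions):
    """Average squared difference between targets and predictions."""
    return sum((y - p) ** 2 for y, p in zip(ys, predictions)) / len(ys)


# Hypothetical data that follows y = 2x + 1 exactly.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_simple_linear_regression(xs, ys)
predictions = [slope * x + intercept for x in xs]
baseline_mse = mean_squared_error(ys, predictions)

print(slope, intercept, baseline_mse)  # 2.0 1.0 0.0
```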
